sEMG Gesture Recognition Using Neural Networks

My previous research was about using smartphones as interaction devices. This time, I investigated whether Neural Networks (NNs) that evaluate Surface Electromyography (sEMG) data can serve as a Human-Computer Interaction (HCI) interface. In other words, I wanted to trigger specific actions on a computer using different hand gestures.

Previous research succeeded with this approach, but its performance (gesture detection accuracy) was not great. In addition, it only worked for people whose data had been used to train the NN. To address these issues, I used a different approach based on Transfer Learning (TL). A large network is first trained on a lot of data from many different people. It is then adjusted to the person whose gestures are to be detected.

Data Collection

The data was captured using the (now discontinued) Myo armband, which has eight built-in sEMG sensors that measure muscle activity. During the study, 13 participants recorded three gestures (rock, paper, and scissors) in multiple sessions. The data is available online and can be downloaded from here. Part of this data was preprocessed and used to train the “base model.” Its architecture is based on previous research, and its hyperparameters were then tuned on my data.
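
The preprocessing itself is described in the paper, not here. As a hedged illustration only, one common way to prepare such recordings for a sequence model is to cut each recording into fixed-length, overlapping windows of 8-channel samples and label each window with its gesture. The window length, the step size and the function name `make_windows` are hypothetical and are not taken from the study.

```python
from typing import List, Sequence, Tuple

NUM_CHANNELS = 8  # the Myo armband has eight sEMG sensors
GESTURES = ("rock", "paper", "scissors")

Window = List[List[float]]


def make_windows(
    samples: Sequence[Sequence[float]],
    label: str,
    window: int = 50,  # hypothetical window length (samples per sequence)
    step: int = 25,    # hypothetical hop between consecutive windows
) -> List[Tuple[Window, int]]:
    """Cut one recording into overlapping fixed-length windows.

    Each window becomes one input sequence for the base model and is paired
    with the integer index of the gesture recorded in that session.
    """
    if any(len(s) != NUM_CHANNELS for s in samples):
        raise ValueError("every sample must contain one value per sEMG channel")
    class_index = GESTURES.index(label)
    return [
        ([list(s) for s in samples[start:start + window]], class_index)
        for start in range(0, len(samples) - window + 1, step)
    ]


# Usage with a dummy 200-sample "paper" recording.
recording = [[0.0] * NUM_CHANNELS for _ in range(200)]
windows = make_windows(recording, "paper")
print(len(windows))  # 7 windows, starting at samples 0, 25, ..., 150
```

Overlapping windows are a design choice. They produce more training sequences from each session than non-overlapping cuts would.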

Base Model

The base model uses LSTM cells to capture the temporal structure of the sEMG sequences recorded by the armband. The tuned architecture and more details on the data collection process can be found in my paper. The base model performed well on people whose data it was trained on, but it could not classify data from previously unseen people. In other words, the network did not generalize well. This is probably because each person’s arm is built differently, and also because of subtle differences in armband placement.
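
To make the idea of an LSTM reading sEMG sequences more concrete, here is a minimal pure-Python sketch of a single LSTM layer followed by a dense softmax layer over the three gestures. It is not the tuned architecture from the paper. The hidden size, the random initialization and the class name `TinyLSTMClassifier` are assumptions. The weights are untrained, so the output probabilities carry no meaning until the model is trained.

```python
import math
import random
from typing import Dict, List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def matvec(m: Matrix, v: Sequence[float]) -> Vector:
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class TinyLSTMClassifier:
    """One LSTM layer plus a dense softmax layer (illustrative, untrained)."""

    def __init__(self, n_in: int = 8, n_hidden: int = 16, n_classes: int = 3, seed: int = 0):
        rng = random.Random(seed)
        self.n_hidden = n_hidden

        def init(rows: int, cols: int) -> Matrix:
            return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

        # One weight matrix per gate, applied to the concatenation [x_t, h_{t-1}].
        self.w: Dict[str, Matrix] = {gate: init(n_hidden, n_in + n_hidden) for gate in "ifoc"}
        self.b: Dict[str, Vector] = {gate: [0.0] * n_hidden for gate in "ifoc"}
        self.w_out = init(n_classes, n_hidden)

    def forward(self, sequence: Sequence[Sequence[float]]) -> Vector:
        h = [0.0] * self.n_hidden  # hidden state
        c = [0.0] * self.n_hidden  # cell state
        for x_t in sequence:
            z = list(x_t) + h
            pre = {gate: [a + b for a, b in zip(matvec(self.w[gate], z), self.b[gate])]
                   for gate in "ifoc"}
            i = [sigmoid(v) for v in pre["i"]]        # input gate
            f = [sigmoid(v) for v in pre["f"]]        # forget gate
            o = [sigmoid(v) for v in pre["o"]]        # output gate
            cand = [math.tanh(v) for v in pre["c"]]   # candidate cell values
            c = [fv * cv + iv * gv for fv, cv, iv, gv in zip(f, c, i, cand)]
            h = [ov * math.tanh(cv) for ov, cv in zip(o, c)]
        # Classify from the last hidden state with a numerically stable softmax.
        logits = matvec(self.w_out, h)
        m = max(logits)
        exps = [math.exp(v - m) for v in logits]
        total = sum(exps)
        return [e / total for e in exps]  # probabilities for rock, paper, scissors


model = TinyLSTMClassifier()
window = [[0.1 * ((t + ch) % 5) for ch in range(8)] for t in range(50)]
probs = model.forward(window)
```

The cell state `c` carries information from earlier time steps forward. This is what lets the model relate muscle activity across a whole window rather than looking at single samples.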

Transfer Learning

Since the NN has learned to classify gestures correctly for the people it was trained on, we now have to teach it how to adjust to new people. It has already learned to cope with the differences between the people in its training data. For a new person, it now has to learn which differences to look for (e.g., a sensor may now sit over a different muscle because of a different muscle structure). This is achieved with the help of Transfer Learning (TL).

Transfer Learning describes a particular process of adapting a trained Neural Network to a different but related problem by leveraging the existing knowledge.
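
The sensor-to-muscle example above can be made concrete under a simplifying assumption. Suppose a new person wears the armband rotated by a couple of sensor positions. Each channel then reports what a neighboring channel reported for the training subjects. That mismatch is a fixed linear remapping, which a single dense layer can represent exactly. Real anatomical differences are not pure permutations, so this is only an illustration. `rotate_channels`, `undo_rotation_matrix` and `dense` are hypothetical helpers.

```python
from typing import List, Sequence

Matrix = List[List[float]]


def rotate_channels(sample: Sequence[float], shift: int) -> List[float]:
    """Simulate an armband worn `shift` sensor positions off (hypothetical).

    Channel k of the new person reads what channel k + shift read for the
    people the base model was trained on.
    """
    n = len(sample)
    return [sample[(k + shift) % n] for k in range(n)]


def undo_rotation_matrix(shift: int, n: int = 8) -> Matrix:
    """Weights of a dense (linear) layer that restore the original channel order."""
    m = [[0.0] * n for _ in range(n)]
    for k in range(n):
        m[(k + shift) % n][k] = 1.0
    return m


def dense(weights: Matrix, x: Sequence[float]) -> List[float]:
    """Linear dense layer without bias: y = W x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


original = [float(ch) for ch in range(8)]    # readings in the "training" layout
shifted = rotate_channels(original, 2)        # [2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 0.0, 1.0]
restored = dense(undo_rotation_matrix(2), shifted)
print(restored == original)  # True
```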

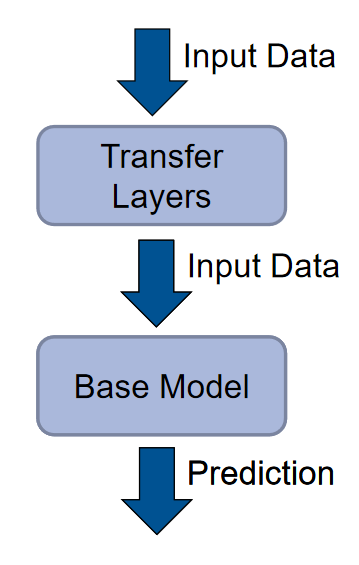

TL model architecture

Instead of feeding the data directly into the base model, as before, it is now fed into a second NN that “translates” the data for the base model. This second NN uses “regular” dense layers instead of LSTM cells, since it does not need to model relationships across multiple time steps.
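
The architecture in the figure can be sketched as a dense layer applied independently to every 8-channel time step, with the resulting sequence then passed to the pretrained base model. This sketch rests on several assumptions. It uses a single linear dense layer initialized to the identity, and `toy_base` is a hypothetical stand-in for the trained LSTM. The actual number and size of the transfer layers are given in the paper.

```python
from typing import Callable, List, Sequence

Signal = Sequence[Sequence[float]]  # time steps x channels


class TransferModel:
    """Dense transfer layer in front of a frozen, pretrained base model (sketch)."""

    def __init__(self, base_model: Callable[[Signal], List[float]], n_channels: int = 8):
        self.base_model = base_model  # pretrained model; never modified here
        # Hypothetical choice: start from the identity mapping so the untrained
        # transfer layer passes the signal through unchanged.
        self.weights = [[1.0 if r == c else 0.0 for c in range(n_channels)]
                        for r in range(n_channels)]
        self.bias = [0.0] * n_channels

    def translate(self, x_t: Sequence[float]) -> List[float]:
        """Apply the dense layer to a single time step (no memory across time)."""
        return [sum(w * x for w, x in zip(row, x_t)) + b
                for row, b in zip(self.weights, self.bias)]

    def __call__(self, sequence: Signal) -> List[float]:
        translated = [self.translate(x_t) for x_t in sequence]
        return self.base_model(translated)


def toy_base(sequence: Signal) -> List[float]:
    """Stand-in for the pretrained LSTM: returns per-channel means (hypothetical)."""
    n = len(sequence)
    return [sum(col) / n for col in zip(*sequence)]


signal = [[0.1 * (t + c) for c in range(8)] for t in range(10)]
model = TransferModel(toy_base)
print(model(signal) == toy_base(signal))  # True while the transfer layer is the identity
```

Starting from the identity means that, before any calibration, the combined model behaves exactly like the base model. Training then only has to learn the deviation for the new person.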

Before gestures from a previously unseen person can be detected, a small amount of training data has to be collected from that person to train just the transfer layers. This is far less data than would be required to train a full regular network to detect that person’s gestures.
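
The following is a minimal sketch of calibrating only the transfer layer, under heavy simplifying assumptions. The LSTM base model is replaced by a frozen linear softmax classifier on the time-averaged input, so the gradient can be written out by hand. The new person is simulated by rotating the channels. All names, data, the learning rate and the epoch count are hypothetical. The sketch shows that the gradient flows *through* the frozen base weights, but only the transfer weights are updated.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]
Sample = Tuple[List[List[float]], int]  # (time steps x channels, gesture index)


def softmax(z: Sequence[float]) -> List[float]:
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def forward(base_w: Matrix, t_w: Matrix, t_b: List[float], seq) -> Tuple[List[float], List[float]]:
    # Averaging over time after a per-time-step linear layer equals applying the
    # layer to the time average, so only the mean input is needed here.
    n = len(seq)
    x = [sum(col) / n for col in zip(*seq)]
    u = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(t_w, t_b)]
    probs = softmax([sum(w * ui for w, ui in zip(row, u)) for row in base_w])
    return x, probs


def mean_loss(base_w, t_w, t_b, data: List[Sample]) -> float:
    """Average cross-entropy over the calibration set."""
    return sum(-math.log(forward(base_w, t_w, t_b, seq)[1][y]) for seq, y in data) / len(data)


def train_transfer_layer(base_w, t_w, t_b, data: List[Sample], lr: float = 0.01, epochs: int = 200) -> None:
    """Full-batch gradient descent on the transfer weights only; base_w stays frozen."""
    n_ch, n_cls = len(t_b), len(base_w)
    for _ in range(epochs):
        grad_w = [[0.0] * n_ch for _ in range(n_ch)]
        grad_b = [0.0] * n_ch
        for seq, y in data:
            x, probs = forward(base_w, t_w, t_b, seq)
            err = [p - (1.0 if c == y else 0.0) for c, p in enumerate(probs)]  # dLoss/dlogits
            # Backpropagate through the frozen base weights to the translated input.
            d_u = [sum(base_w[c][j] * err[c] for c in range(n_cls)) for j in range(n_ch)]
            for j in range(n_ch):
                grad_b[j] += d_u[j] / len(data)
                for k in range(n_ch):
                    grad_w[j][k] += d_u[j] * x[k] / len(data)
        for j in range(n_ch):  # update only the transfer parameters
            t_b[j] -= lr * grad_b[j]
            for k in range(n_ch):
                t_w[j][k] -= lr * grad_w[j][k]


# Hypothetical frozen "base model": one prototype activation pattern per gesture.
base_w = [[1.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0, 0.0],   # rock
          [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0],   # paper
          [1.0, 1.0, 0.0, 0.0, 1.0, 1.0, 0.0, 0.0]]   # scissors
base_snapshot = [row[:] for row in base_w]

# Simulated new person: armband rotated by two sensors, five time steps per recording.
calibration = [([[p[(k + 2) % 8] for k in range(8)]] * 5, label) for label, p in enumerate(base_w)]

t_w = [[1.0 if r == c else 0.0 for c in range(8)] for r in range(8)]  # identity init
t_b = [0.0] * 8
loss_before = mean_loss(base_w, t_w, t_b, calibration)
train_transfer_layer(base_w, t_w, t_b, calibration)
loss_after = mean_loss(base_w, t_w, t_b, calibration)
print(f"calibration loss: {loss_before:.3f} -> {loss_after:.3f}")
```

Because only the 8 x 8 transfer weights and 8 biases are trained, a handful of calibration recordings is enough to fit them. This matches the point above that far less data is needed than for training a full network.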

The results looked very promising, and after training the transfer layers, the network performed quite well!

Conclusion

This makes TL a great strategy for implementing sEMG-based HCI interfaces. It requires very little training data, and both recording that data and training the transfer layers take very little time. It is quick and easy to use for the end user, which is crucial for HCI interfaces. The accuracy was quite high, and the system was able to run in real time. There is still a lot more to improve and research. Even so, using TL on an LSTM-based NN to classify sEMG data from different, previously unseen people appears to be a good approach.

If you want to read the full paper, you can check it out here.